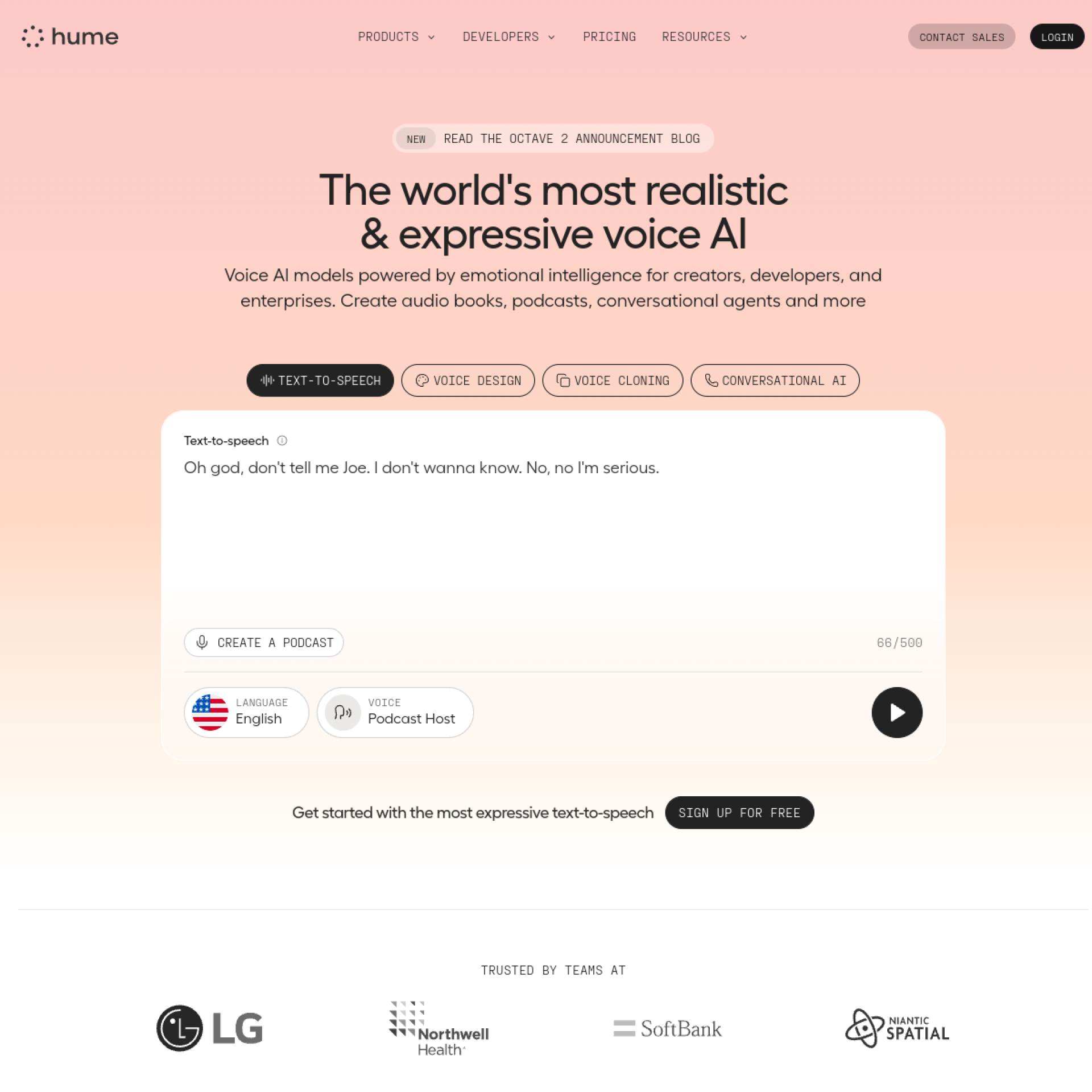

What is Hume AI?

Hume AI builds voice AI that understands emotional expression: not just what people say, but how they say it. The technology analyzes vocal characteristics like tone, pace, and inflection to detect emotions and respond appropriately. It recognizes frustration, confusion, or enthusiasm and adapts accordingly.

For developers building voice interfaces and conversational AI, Hume adds the emotional intelligence that makes interactions feel human rather than robotic.

[cta text="Build Emotionally Intelligent Voice AI"]

Key Features

Expression Measurement

The Expression Measurement APIs analyze voice for emotional content and detect 48+ emotional expressions from audio. Processing runs in real time, so applications can respond while the user is still speaking.
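To make this concrete, the sketch below ranks expression scores for a single audio segment. It assumes the analysis result has already been reduced to a mapping from expression name to a score between 0 and 1. That shape, the `top_expressions` helper, and the sample values are illustrative assumptions, not Hume's actual response format.

```python
from typing import Dict, List, Tuple


def top_expressions(scores: Dict[str, float], k: int = 3) -> List[Tuple[str, float]]:
    """Return the k highest-scoring expressions, strongest first."""
    if k < 0:
        raise ValueError("k must be non-negative")
    # Sort by score descending; break ties alphabetically for stable output.
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return ranked[:k]


# Hypothetical scores for one audio segment (not real API output).
sample_scores = {
    "frustration": 0.72,
    "confusion": 0.41,
    "enthusiasm": 0.08,
    "calmness": 0.15,
}

print(top_expressions(sample_scores, k=2))
# → [('frustration', 0.72), ('confusion', 0.41)]
```

An application would typically act on only the top one or two expressions rather than all 48+, which is why the helper truncates the ranking.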

Empathic Voice Interface

Build voice agents that respond to emotional context. The AI adapts its tone and responses to the user's emotional state, producing a natural conversation flow that acknowledges feelings.

Text Expression Analysis

Analyze written text for emotional content alongside voice. Combining the two gives applications a multimodal view of how a user is communicating.

Developer APIs

REST APIs integrate emotional AI into applications. SDKs for Python and JavaScript accelerate development. Documentation and examples support implementation.

Custom Training

Train models on domain-specific emotional expressions. Healthcare, customer service, and other verticals have their own expression patterns, and customizing for them improves accuracy.

Pricing

Hume AI offers developer access with usage-based pricing. A free tier is available for experimentation. Production pricing is based on API calls and audio processed, and enterprise agreements are available for high-volume applications.

Who Uses Hume AI?

Developers building conversational AI add emotional intelligence to voice assistants and chatbots, improving the user experience through emotional awareness.

Healthcare applications use emotional analysis for mental health screening and patient interaction. Voice patterns can indicate emotional states relevant to care.

Customer service platforms integrate Hume to detect frustrated customers and escalate appropriately. The emotional context helps agents resolve issues.
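One way such an escalation rule might look is sketched below. It assumes the platform already receives a per-utterance frustration score between 0 and 1 from an emotion analysis step. The `EscalationMonitor` class, the 0.6 threshold, and the three-utterance window are hypothetical choices for illustration, not part of Hume's API.

```python
from collections import deque
from typing import Deque


class EscalationMonitor:
    """Flag a call for escalation when frustration stays high."""

    def __init__(self, threshold: float = 0.6, window: int = 3) -> None:
        if window < 1:
            raise ValueError("window must be at least 1")
        self.threshold = threshold
        # Keep only the most recent `window` scores.
        self.recent: Deque[float] = deque(maxlen=window)

    def update(self, frustration_score: float) -> bool:
        """Record the latest score; return True if the call should escalate."""
        self.recent.append(frustration_score)
        window_full = len(self.recent) == self.recent.maxlen
        average = sum(self.recent) / len(self.recent)
        # Require a full window so one angry utterance does not trigger a handoff.
        return window_full and average >= self.threshold


# Hypothetical frustration scores for four consecutive utterances.
monitor = EscalationMonitor(threshold=0.6, window=3)
decisions = [monitor.update(score) for score in [0.2, 0.7, 0.8, 0.9]]
print(decisions)
# → [False, False, False, True]
```

Averaging over a short window rather than reacting to a single score is a design choice that trades a little latency for fewer false escalations.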

Hume AI vs Competitors

Hume AI vs Basic Speech Recognition

Standard speech-to-text captures words without emotional context. Hume adds the emotional layer that transforms transcription into understanding.

Hume AI vs Other Emotion AI

Various emotion AI providers exist with different approaches. Hume's focus on voice and its research foundation differentiate it from competitors centered on facial analysis.

Final Verdict

Hume AI brings emotional intelligence to voice AI applications. For developers building conversational interfaces that need to understand not just words but feelings, Hume provides the technology to create more human interactions.

Rating: 4.4/5